Introduction

Effective February 1, 2024, Amazon Web Services (AWS) charges $0.005 per hour for every public IPv4 address. AWS attributes the change to the growing scarcity of IPv4 addresses and the rising cost of acquiring them. The charge applies to all public IPv4 addresses, whether they are attached to a running resource or sitting idle.
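The sketch below estimates the bill for a given number of public IPv4 addresses. It assumes a 730-hour month (8,760 hours divided by 12) and the $0.005 hourly rate quoted above. `ipv4_cost` is a hypothetical helper. Actual charges depend on how many hours each address is allocated.

```python
# Public IPv4 cost estimate at the rate quoted above.
HOURLY_RATE_USD = 0.005  # per public IPv4 address per hour
HOURS_PER_MONTH = 730    # assumption: 8,760 hours per year / 12 months


def ipv4_cost(public_ipv4_count: int, hours: float = HOURS_PER_MONTH) -> float:
    """Estimate the USD cost of holding `public_ipv4_count` addresses for `hours` hours."""
    if public_ipv4_count < 0 or hours < 0:
        raise ValueError("address count and hours must be non-negative")
    return round(public_ipv4_count * hours * HOURLY_RATE_USD, 2)


print(ipv4_cost(1))                # 3.65 for one address for one month
print(ipv4_cost(100))              # 365.0 for 100 addresses for one month
print(ipv4_cost(100, hours=8760))  # 4380.0 for 100 addresses for a year
```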

To reduce these costs and future-proof their infrastructure, AWS users are encouraged to adopt IPv6. IPv6 is the latest version of the Internet Protocol. It offers a vastly larger address space than IPv4, which is needed to serve the growing number of devices that require an Internet connection. The savings come from removing public IPv4 addresses. A dual-stack resource that keeps its public IPv4 address is still billed for it.

What it means

The transition to IPv6 is therefore an important step for businesses that rely on AWS. Moving to IPv6 sidesteps the scarcity, and now the cost, of public IPv4 addresses. It also brings the protocol-level improvements described below. AWS publishes documentation and guidance for using IPv6 with its services, and users are encouraged to draw on these resources to plan a smooth migration.

IPv6 vs IPv4

IPv4 and IPv6 are two versions of the Internet Protocol, which defines how devices connected to the Internet are given unique addresses. IPv4 has been the backbone of the Internet for decades and was instrumental in enabling its growth. However, rising demand for Internet-connected devices has exhausted the free IPv4 address pool. IANA allocated its last blocks in 2011, and the regional Internet registries have since run out, or nearly run out, of their own.

IPv6 is the newest version of the Internet Protocol. Its 128-bit addresses provide about 340 undecillion (2^128, roughly 3.4 × 10^38) unique addresses. IPv4's 32-bit addresses allow only about 4.3 billion (2^32). That difference is why IPv6 adoption matters: it removes address scarcity as a constraint on the growing number of Internet-connected devices.
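Both figures follow directly from address width. Python's standard `ipaddress` module can confirm them by counting the addresses in each protocol's all-encompassing network:

```python
import ipaddress

# Count every address in each protocol's "whole Internet" network.
ipv4_total = ipaddress.ip_network("0.0.0.0/0").num_addresses  # 2**32
ipv6_total = ipaddress.ip_network("::/0").num_addresses       # 2**128

print(f"IPv4: {ipv4_total:,}")    # IPv4: 4,294,967,296
print(f"IPv6: {ipv6_total:.2e}")  # IPv6: 3.40e+38
print(f"IPv6 per IPv4 address: {ipv6_total // ipv4_total:.2e}")  # 7.92e+28, i.e. 2**96
```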

Beyond sheer capacity, IPv6 simplifies routing, supports stateless address auto-configuration, was designed with IPsec in mind, and makes it easier to deploy new services and applications. IPv6 also builds multicast into the protocol, which enables efficient distribution of data to multiple devices. It replaces broadcast entirely, and core functions such as Neighbor Discovery rely on multicast. In IPv4, multicast was a later, optional extension.

Adopting IPv6 is therefore not only necessary to meet the growing demand for Internet-connected devices. It also brings improvements that IPv4 cannot offer. Above all, its scalability makes it the natural choice for the future of the Internet.

Advantages of IPv6

IPv6, the successor to IPv4, provides several advantages in terms of network infrastructure.

1. Virtually unlimited address space

One of the most significant benefits of IPv6 is its virtually unlimited address space, which allows for an enormous number of unique IP addresses. This feature is particularly important as we continue to add more devices to the internet, including smart home appliances, sensors and other IoT devices.

2. Enhanced routing and network auto-configuration capabilities

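IPv6 also streamlines routing and network configuration. Its fixed 40-byte header carries no header checksum, and routers never fragment packets, which makes forwarding simpler. Stateless address auto-configuration (SLAAC) lets hosts configure their own addresses from router advertisements without manual setup or a DHCP server. Together, these features make network management more efficient and flexible, so networks can expand and adapt more easily as business needs change.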
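The sketch below illustrates stateless auto-configuration by building an address the classic SLAAC way. It combines a /64 prefix learned from a router advertisement with a modified EUI-64 interface identifier derived from the MAC address (RFC 4291, Appendix A). It is a general illustration only. Many operating systems now generate randomized or stable-opaque interface identifiers instead. Inside an AWS VPC, AWS assigns IPv6 addresses rather than hosts deriving them this way. The helper names are hypothetical, and the prefix and MAC are documentation placeholders.

```python
import ipaddress


def eui64_interface_id(mac: str) -> bytes:
    """Derive a modified EUI-64 interface identifier from a 48-bit MAC address."""
    octets = bytes(int(part, 16) for part in mac.split(":"))
    if len(octets) != 6:
        raise ValueError(f"expected a 48-bit MAC address, got {mac!r}")
    # Insert FF:FE between the two halves of the MAC, then flip the
    # universal/local bit (0x02) of the first octet.
    eui = bytearray(octets[:3] + b"\xff\xfe" + octets[3:])
    eui[0] ^= 0x02
    return bytes(eui)


def slaac_address(prefix: str, mac: str) -> ipaddress.IPv6Address:
    """Combine a /64 prefix (as advertised by a router) with the EUI-64 interface ID."""
    network = ipaddress.IPv6Network(prefix)
    if network.prefixlen != 64:
        raise ValueError("SLAAC uses a /64 prefix")
    interface_id = int.from_bytes(eui64_interface_id(mac), "big")
    return network.network_address + interface_id


print(slaac_address("2001:db8:1:2::/64", "00:1a:2b:3c:4d:5e"))
# 2001:db8:1:2:21a:2bff:fe3c:4d5e
```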

3. Improved security features

IPv6 was designed with security in mind. It was specified from the outset alongside IPsec, a suite of protocols that authenticates and encrypts IP packets to provide end-to-end protection for data in transit. Early IPv6 standards required every implementation to support IPsec, although later revisions (RFC 6434) relaxed this to a recommendation. IPv6 also replaces ARP with the Neighbor Discovery Protocol. Its neighbor and router advertisements are not protected by default. Extensions such as Secure Neighbor Discovery (SEND) and RA Guard are designed to protect them against spoofing and man-in-the-middle attacks.

4. Better support for new services and applications

Because every device can have a globally unique address, IPv6 removes the need for Network Address Translation (NAT) in many designs. Restoring end-to-end connectivity simplifies services that depend on direct connections between devices, such as real-time communication, peer-to-peer applications, and online gaming. This makes it easier for businesses to develop and deploy new applications that can help them stay ahead of the competition.

5. Future-proofing operations for sustained growth and innovation

IPv6 is future-proof: its address space is large enough that it will not run out on any practical timescale. It can therefore keep pace with the growing demands of the Internet and the evolving needs of businesses, providing a solid foundation for sustained growth and innovation.

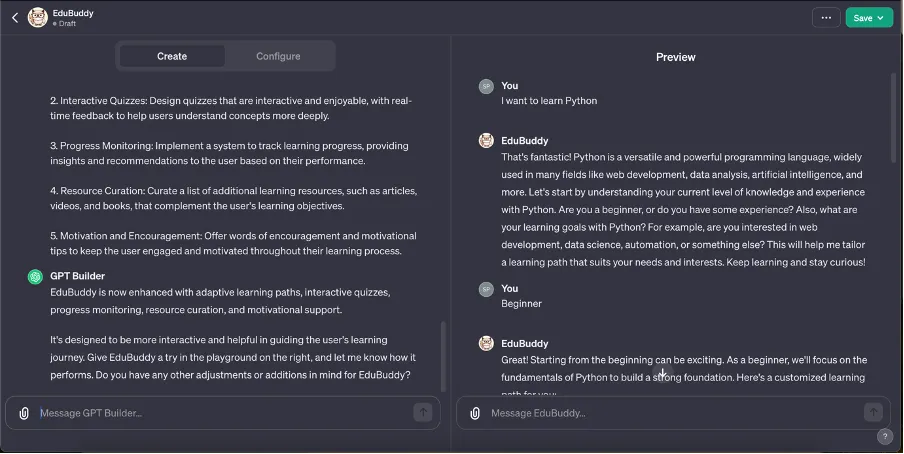

Understanding the Transition: A Step-by-Step Guide

1. Assessing Your Current Environment:

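- Identify all AWS resources that use public IPv4 addresses, such as EC2 instances, Elastic IPs, internet-facing load balancers, and NAT gateways.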

- Gain a comprehensive understanding of the components requiring transition.
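One way to build the inventory is to list every network interface with a public IPv4 association. The sketch below assumes the AWS SDK for Python (boto3) and parses pages returned by EC2's `DescribeNetworkInterfaces` API. `public_ipv4_by_eni` is a hypothetical helper, and the commented usage requires boto3 and AWS credentials. Each call covers a single region. Elastic IPs that are allocated but not associated do not appear on any interface, so check those separately, for example with `describe_addresses`.

```python
from typing import Any, Dict, Iterable, List, Tuple


def public_ipv4_by_eni(pages: Iterable[Dict[str, Any]]) -> List[Tuple[str, str]]:
    """Return (network interface ID, public IPv4) pairs from DescribeNetworkInterfaces pages."""
    found: List[Tuple[str, str]] = []
    for page in pages:
        for eni in page.get("NetworkInterfaces", []):
            eni_id = eni.get("NetworkInterfaceId", "unknown")
            # The primary public IP appears at the top level; each private IP can
            # also carry its own public association (e.g. an extra Elastic IP).
            candidates = [eni.get("Association", {}).get("PublicIp")]
            candidates += [
                private.get("Association", {}).get("PublicIp")
                for private in eni.get("PrivateIpAddresses", [])
            ]
            seen = set()
            for ip in candidates:
                if ip and ip not in seen:  # skip empty slots and duplicates
                    seen.add(ip)
                    found.append((eni_id, ip))
    return found


# Hypothetical usage (requires boto3 and AWS credentials; region is a placeholder):
#   import boto3
#   ec2 = boto3.client("ec2", region_name="us-east-1")
#   pages = ec2.get_paginator("describe_network_interfaces").paginate()
#   for eni_id, ip in public_ipv4_by_eni(pages):
#       print(eni_id, ip)
```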

2. IPv6 Capability Check:

- Ensure compatibility of applications, services, and infrastructure with IPv6.

- Consider necessary updates or replacements for seamless integration.
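A frequent source of IPv6 incompatibility is code that builds `host:port` strings by plain concatenation. IPv6 literals must be wrapped in square brackets in URLs (RFC 3986). Without brackets, `2001:db8::10` plus port `8080` becomes `2001:db8::10:8080`, which is itself a valid but different IPv6 address. The hypothetical helper below shows the fix using Python's standard `ipaddress` module:

```python
import ipaddress


def host_port(host: str, port: int) -> str:
    """Join host and port for a URL authority, bracketing IPv6 literals (RFC 3986)."""
    try:
        if isinstance(ipaddress.ip_address(host), ipaddress.IPv6Address):
            return f"[{host}]:{port}"
    except ValueError:
        pass  # not an IP literal, e.g. a DNS name such as example.com
    return f"{host}:{port}"


print(host_port("2001:db8::10", 8080))  # [2001:db8::10]:8080
print(host_port("192.0.2.1", 80))       # 192.0.2.1:80
```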

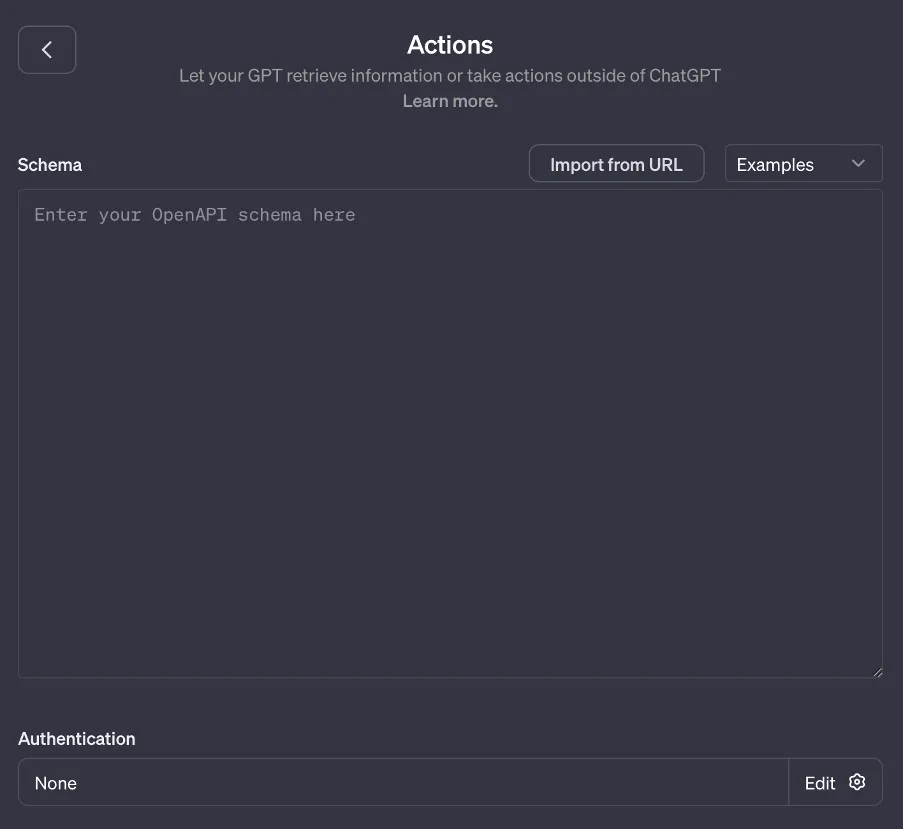

3. VPC Configuration:

- Access the AWS Management Console.

- Navigate to the VPC Dashboard.

- Select your VPC.

- In the "Actions" menu, choose "Edit CIDRs."

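- Add an IPv6 CIDR block, for example an Amazon-provided /56.

- Update your route tables with IPv6 routes. For public subnets, route ::/0 to the internet gateway. For private subnets that need outbound-only IPv6 access, route ::/0 to an egress-only internet gateway.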
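The same VPC changes can be scripted. The sketch below assumes boto3, with an EC2 client passed in as `ec2`. The helper names are hypothetical, and the resource IDs are your own. It requests an Amazon-provided /56 for the VPC and adds a `::/0` route to either an internet gateway (`igw-` prefix) or an egress-only internet gateway (`eigw-` prefix).

```python
from typing import Any, Dict


def ipv6_default_route(route_table_id: str, gateway_id: str) -> Dict[str, Any]:
    """Build CreateRoute parameters that send all IPv6 traffic (::/0) to a gateway."""
    params: Dict[str, Any] = {
        "RouteTableId": route_table_id,
        "DestinationIpv6CidrBlock": "::/0",
    }
    if gateway_id.startswith("eigw-"):
        # Private subnets: outbound-only IPv6 via an egress-only internet gateway.
        params["EgressOnlyInternetGatewayId"] = gateway_id
    elif gateway_id.startswith("igw-"):
        # Public subnets: two-way IPv6 via the internet gateway.
        params["GatewayId"] = gateway_id
    else:
        raise ValueError(f"unsupported gateway for this sketch: {gateway_id!r}")
    return params


def enable_vpc_ipv6(ec2: Any, vpc_id: str, route_table_id: str, gateway_id: str) -> str:
    """Request an Amazon-provided IPv6 /56 for the VPC, then add a ::/0 route.

    `ec2` is assumed to be a boto3 EC2 client. Returns the CIDR association ID.
    This sketch does not wait for the block to finish associating before adding
    the route; in production, poll describe_vpcs until its state is "associated".
    """
    resp = ec2.associate_vpc_cidr_block(VpcId=vpc_id, AmazonProvidedIpv6CidrBlock=True)
    ec2.create_route(**ipv6_default_route(route_table_id, gateway_id))
    return resp["Ipv6CidrBlockAssociation"]["AssociationId"]
```

Keeping the parameter-building step separate from the API calls makes the routing decision easy to review and test without touching AWS.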

4. Subnet Modifications:

- In the VPC Dashboard, select "Subnets."

- Choose a subnet, and in the "Actions" menu, select "Edit IPv6 CIDRs."

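- Add a /64 IPv6 CIDR block from the VPC's range to the subnet.

- Ensure your IPv6 addressing plan aligns with network requirements. Consider enabling automatic IPv6 address assignment in the subnet settings so that new instances receive an IPv6 address.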
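With an Amazon-provided IPv6 block, a VPC gets a /56 and each subnet takes a /64 from it, which leaves room for 256 subnets. The hypothetical helper below, built on Python's `ipaddress` module, hands out /64s in order. The VPC block and subnet names are placeholders taken from the `2001:db8::/32` documentation range. The commented boto3 calls and subnet ID are assumptions showing how the plan might be applied.

```python
import ipaddress
from typing import Dict, List


def plan_ipv6_subnets(vpc_block: str, subnet_names: List[str]) -> Dict[str, str]:
    """Give each named subnet the next free /64 from the VPC's IPv6 block."""
    vpc = ipaddress.IPv6Network(vpc_block)
    if vpc.prefixlen > 64:
        raise ValueError("the VPC block must be /64 or larger")
    free_64s = vpc.subnets(new_prefix=64)  # generator, in address order
    plan: Dict[str, str] = {}
    for name in subnet_names:
        block = next(free_64s, None)
        if block is None:
            raise ValueError(f"no /64 left for subnet {name!r}")
        plan[name] = str(block)
    return plan


print(plan_ipv6_subnets("2001:db8:1234:5600::/56", ["public-a", "private-a"]))
# {'public-a': '2001:db8:1234:5600::/64', 'private-a': '2001:db8:1234:5601::/64'}

# Hypothetical follow-up with a boto3 EC2 client `ec2` (subnet ID is a placeholder):
#   ec2.associate_subnet_cidr_block(SubnetId="subnet-0123456789abcdef0",
#                                   Ipv6CidrBlock="2001:db8:1234:5600::/64")
#   ec2.modify_subnet_attribute(SubnetId="subnet-0123456789abcdef0",
#                               AssignIpv6AddressOnCreation={"Value": True})
```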

5. Security Group Adjustments:

- Navigate to the EC2 Dashboard.

- Choose "Security Groups" from the left-hand menu.

- Select the security group associated with your instances.

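- Edit inbound and outbound rules to allow the IPv6 traffic your workloads need. Rules for 0.0.0.0/0 apply only to IPv4, so add matching ::/0 (or narrower IPv6) rules. If you use custom network ACLs, update them as well.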

- Save the changes.
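In security groups, IPv4 and IPv6 sources are listed separately, so an existing rule for `0.0.0.0/0` does not admit IPv6 clients. The sketch below builds the `IpPermissions` structure accepted by boto3's `authorize_security_group_ingress`. The helper name, ports, and group ID are illustrative assumptions.

```python
import ipaddress
from typing import Any, Dict, List


def ipv6_ingress_permissions(ports: List[int], cidr: str = "::/0") -> List[Dict[str, Any]]:
    """Build IpPermissions entries that open each TCP port to an IPv6 CIDR."""
    ipaddress.IPv6Network(cidr)  # raises ValueError if cidr is not a valid IPv6 network
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            # IPv6 sources go in "Ipv6Ranges" (key "CidrIpv6"), not in "IpRanges".
            "Ipv6Ranges": [{"CidrIpv6": cidr, "Description": "IPv6 clients"}],
        }
        for port in ports
    ]


# Hypothetical usage with a boto3 EC2 client `ec2` (group ID is a placeholder):
#   ec2.authorize_security_group_ingress(
#       GroupId="sg-0123456789abcdef0",
#       IpPermissions=ipv6_ingress_permissions([80, 443]),
#   )
```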

6. Instance Configuration:

- In the EC2 Dashboard, select "Instances."

- Identify and choose the target instance.

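- Stopping the instance is not required. IPv6 addresses can be assigned while it is running, provided its subnet has an IPv6 CIDR block.

- Click on "Actions" and navigate to "Networking," then select "Manage IP addresses."

- In the IPv6 addresses section, assign a new IPv6 address, either one you specify or one auto-assigned from the subnet's range.

- Save the changes. Current Amazon Linux and most recent AMIs pick up the address automatically via DHCPv6. Older operating systems may need their network configuration updated before the address becomes usable.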
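The console steps can also be scripted. The sketch below assumes boto3, with an EC2 client passed in as `ec2`. `assign_ipv6` wraps EC2's `AssignIpv6Addresses` call, which picks addresses from the subnet's range when you supply only a count. `ipv6_by_instance` summarizes a `DescribeInstances` response so you can confirm the result. Both helper names are hypothetical, and the IDs in the usage comment are placeholders.

```python
from typing import Any, Dict, List


def assign_ipv6(ec2: Any, eni_id: str, count: int = 1) -> List[str]:
    """Have EC2 pick `count` IPv6 addresses from the subnet's range for a network interface.

    `ec2` is assumed to be a boto3 EC2 client.
    """
    resp = ec2.assign_ipv6_addresses(NetworkInterfaceId=eni_id, Ipv6AddressCount=count)
    return resp.get("AssignedIpv6Addresses", [])


def ipv6_by_instance(response: Dict[str, Any]) -> Dict[str, List[str]]:
    """Map each instance ID in a DescribeInstances response to its IPv6 addresses."""
    result: Dict[str, List[str]] = {}
    for reservation in response.get("Reservations", []):
        for instance in reservation.get("Instances", []):
            result[instance["InstanceId"]] = [
                addr["Ipv6Address"]
                for eni in instance.get("NetworkInterfaces", [])
                for addr in eni.get("Ipv6Addresses", [])
            ]
    return result


# Hypothetical usage (IDs are placeholders):
#   import boto3
#   ec2 = boto3.client("ec2")
#   print(assign_ipv6(ec2, "eni-0123456789abcdef0"))
#   print(ipv6_by_instance(ec2.describe_instances(InstanceIds=["i-0123456789abcdef0"])))
```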

7. Testing and Validation:

- Use AWS tools like VPC Reachability Analyzer to validate IPv6 connectivity.

- Conduct thorough application testing to ensure seamless IPv6 integration.

- Address and resolve any identified issues during the testing phase.
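Alongside AWS tooling, a quick end-to-end check is to open a TCP connection that is forced onto IPv6. The hypothetical probe below uses only Python's standard `socket` module. It resolves AAAA records (or accepts an IPv6 literal) and reports whether any of the resulting addresses accepts a connection. Run it from a client that itself has IPv6 connectivity. The hostname in the usage comment is a placeholder.

```python
import socket


def tcp_reachable_over_ipv6(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds over IPv6 only."""
    try:
        # AF_INET6 restricts resolution to AAAA records or IPv6 literals.
        infos = socket.getaddrinfo(host, port, socket.AF_INET6, socket.SOCK_STREAM)
    except OSError:  # socket.gaierror is a subclass: no IPv6 address for this host
        return False
    for family, socktype, proto, _canonname, sockaddr in infos:
        try:
            with socket.socket(family, socktype, proto) as sock:
                sock.settimeout(timeout)
                sock.connect(sockaddr)
                return True
        except OSError:  # refused, timed out, unreachable, or IPv6 unavailable locally
            continue
    return False


# Hypothetical usage from an IPv6-capable client (hostname is a placeholder):
#   print(tcp_reachable_over_ipv6("app.example.com", 443))
```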

8. DNS Updates:

- Access your DNS provider's dashboard.

- Add AAAA records for your IPv6 addresses alongside the existing A records.

- Ensure clients and users can connect seamlessly using either protocol.
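If your DNS is hosted in Amazon Route 53 (an assumption, since other providers have equivalent APIs), the change amounts to upserting an AAAA record next to the existing A record. Keeping both records lets IPv4 and IPv6 clients connect. The sketch below builds the `ChangeBatch` for boto3's `change_resource_record_sets`. The helper name, record name, zone ID, and address are placeholders. For load balancers, you would normally use an alias AAAA record instead of fixed addresses.

```python
import ipaddress
from typing import Any, Dict, List


def aaaa_upsert(name: str, addresses: List[str], ttl: int = 300) -> Dict[str, Any]:
    """Build a Route 53 ChangeBatch that creates or replaces an AAAA record."""
    # IPv6Address() rejects malformed input and str() normalizes it (lowercase, '::').
    values = [str(ipaddress.IPv6Address(addr)) for addr in addresses]
    return {
        "Comment": f"IPv6 (AAAA) record for {name}",
        "Changes": [
            {
                "Action": "UPSERT",
                "ResourceRecordSet": {
                    "Name": name,
                    "Type": "AAAA",
                    "TTL": ttl,
                    "ResourceRecords": [{"Value": v} for v in values],
                },
            }
        ],
    }


# Hypothetical usage with boto3 (zone ID, name, and address are placeholders):
#   import boto3
#   route53 = boto3.client("route53")
#   route53.change_resource_record_sets(
#       HostedZoneId="Z0123456789EXAMPLE",
#       ChangeBatch=aaaa_upsert("app.example.com.", ["2001:db8::10"]),
#   )
```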

9. Monitoring and Optimization:

- Use Amazon CloudWatch to monitor IPv6-enabled resources, and consider enabling VPC Flow Logs, which capture IPv6 as well as IPv4 traffic.

- Analyze performance data to optimize configurations for efficient operation.
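To track how much traffic has actually moved to IPv6, one option is VPC Flow Logs, which record both IPv6 and IPv4 flows and can be published to CloudWatch Logs. The sketch below assumes records in the default version 2 format, with 14 space-separated fields and `bytes` as the tenth. It computes the share of bytes sent from IPv6 source addresses. `ipv6_byte_share` is a hypothetical helper.

```python
import ipaddress
from typing import Iterable


def ipv6_byte_share(records: Iterable[str]) -> float:
    """Fraction of bytes sent from IPv6 source addresses in default-format flow log records.

    Assumes the default version 2 format:
    version account-id interface-id srcaddr dstaddr srcport dstport protocol
    packets bytes start end action log-status
    """
    v4_bytes = v6_bytes = 0
    for line in records:
        fields = line.split()
        # Skip headers, custom formats, and NODATA/SKIPDATA records (which use "-").
        if len(fields) != 14 or fields[0] != "2" or "-" in (fields[3], fields[9]):
            continue
        if ipaddress.ip_address(fields[3]).version == 6:
            v6_bytes += int(fields[9])
        else:
            v4_bytes += int(fields[9])
    total = v4_bytes + v6_bytes
    return v6_bytes / total if total else 0.0
```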

Conclusion:

Transitioning from IPv4 to IPv6 on AWS is a strategic move to reduce public IPv4 charges, future-proof your infrastructure, and support long-term growth. While the process may appear intricate, careful planning, thorough testing, and the right approach can make the transition smooth and efficient. Embrace the advantages of IPv6 and position your business ahead in the ever-evolving digital landscape.